Neural network reconstructs the video you watch from your brain activity

A group of Russian scientists trained an artificial intelligence system to recreate images from videos that people are watching. To do this, they used recordings of the brain's electrical activity and experimented with neural networks.

Scientists from the Neurorobotics Laboratory at MIPT and the Neurobotics research organization taught the AI to use electroencephalogram (EEG) data, recorded from the surface of the head, to recreate the videos a person is viewing.

As part of the experiment, people were shown videos from different categories (waterfalls, abstract shapes, faces, etc.) while their brain activity was recorded using EEG. Even at this stage, it became clear that the spectra of the brain-wave activity differ noticeably between categories. That is, the brain reacts differently to different kinds of pictures in real time.

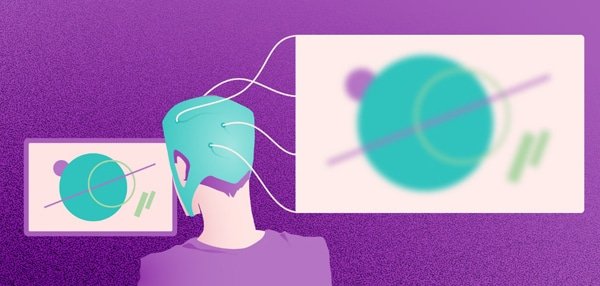
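
To give a feel for what "different wave activity spectra" means, here is a minimal sketch in Python with NumPy. It does not use the researchers' data or method: the two signals are synthetic sine waves with noise, and the sampling rate, the band limits and the helper name `band_power` are all assumptions made for illustration.

```python
import numpy as np

def band_power(signal, fs, low, high):
    """Mean spectral power of `signal` between `low` and `high` Hz."""
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal)) ** 2 / len(signal)
    mask = (freqs >= low) & (freqs < high)
    return power[mask].mean()

fs = 250  # assumed sampling rate in Hz
t = np.arange(0, 4, 1.0 / fs)
rng = np.random.default_rng(0)

# Synthetic stand-ins for two recordings (not real EEG):
# one dominated by a 10 Hz rhythm, the other by a 20 Hz rhythm.
eeg_a = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
eeg_b = np.sin(2 * np.pi * 20 * t) + 0.1 * rng.standard_normal(t.size)

# Compare power in two assumed frequency bands (8-13 Hz and 13-30 Hz).
alpha_a = band_power(eeg_a, fs, 8, 13)
alpha_b = band_power(eeg_b, fs, 8, 13)
beta_a = band_power(eeg_a, fs, 13, 30)
beta_b = band_power(eeg_b, fs, 13, 30)

print("8-13 Hz power:", alpha_a, alpha_b)
print("13-30 Hz power:", beta_a, beta_b)
```

The two synthetic signals carry most of their power in different bands, which is the kind of difference a classifier could pick up on.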

The second part of the experiment involved developing two neural networks. The first was tasked with creating arbitrary pictures from “noise”. The second generated similar “noise” from the electroencephalogram. By combining the two networks, the scientists were able to produce frames from the recorded EEG signal.
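
The way the two networks are chained can be sketched roughly as follows. This is only an illustration of the data flow, not the researchers' architecture: each "network" is reduced to a single layer with random, untrained weights, and all sizes and names (`eeg_to_noise`, `noise_to_image`, `LATENT_DIM` and so on) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

N_EEG_FEATURES = 64    # assumed size of one EEG feature vector
LATENT_DIM = 16        # assumed size of the "noise" vector
IMAGE_SHAPE = (8, 8)   # assumed (tiny) output frame

# Random, untrained weights standing in for the two trained networks.
W_eeg = 0.1 * rng.standard_normal((N_EEG_FEATURES, LATENT_DIM))
W_dec = 0.1 * rng.standard_normal((LATENT_DIM, IMAGE_SHAPE[0] * IMAGE_SHAPE[1]))

def eeg_to_noise(eeg_features):
    """Second network: map EEG features to a 'noise' vector."""
    return np.tanh(eeg_features @ W_eeg)

def noise_to_image(noise):
    """First network: turn a 'noise' vector into a picture with pixels in [0, 1]."""
    pixels = 1.0 / (1.0 + np.exp(-(noise @ W_dec)))
    return pixels.reshape(IMAGE_SHAPE)

def reconstruct_frame(eeg_features):
    """Chain the two networks: EEG -> 'noise' -> frame."""
    return noise_to_image(eeg_to_noise(eeg_features))

# A random vector stands in for features extracted from a real recording.
frame = reconstruct_frame(rng.standard_normal(N_EEG_FEATURES))
print(frame.shape)
```

The point of the split is that the picture generator only ever sees a "noise" vector, so it can be trained on images alone, while the second network only has to learn how to produce such vectors from EEG.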

After that, the initial part of the experiment was repeated, and the result was excellent. In 210 cases out of 234, the AI correctly classified the video. The system created realistic images of what people saw. Of course, these pictures lack detail, and human faces look distorted beyond recognition. Even so, the main themes, colors and shapes of the images are conveyed quite accurately.
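
For reference, the classification figure quoted above works out to roughly 90 percent. A quick check in Python, using only the two numbers given in the text:

```python
correct = 210  # correctly classified cases, as reported
total = 234    # total cases, as reported

accuracy = correct / total
print(f"{accuracy:.1%}")  # 89.7%
```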

Currently, all existing technologies for recognizing images from brain activity rely either on functional magnetic resonance imaging (fMRI) data or on analysis of signals taken directly from neurons. It was previously believed that EEG does not contain enough information even for partial reconstruction of an image, but the results of the study refuted this.

One of the authors of the study, Grigory Rashkov, believes that the discovery may be the first step towards creating a real-time brain-computer interface that does not require implantation.

The development has a practical purpose. The algorithm is designed to help rehabilitate people with memory loss and those recovering from strokes. The studies are carried out within the framework of the Russian scientific program “Assistive Technologies”, in which the brain-computer interface plays a key role.

The brain-computer interface. Image: MIPT

In the future, the program's possible applications look quite broad: from controlling a hand exoskeleton or an electric wheelchair to literally turning on a coffee maker with the power of thought. So the distant, fantastic future may well arrive tomorrow. However, perfecting AI-assisted mind reading will take about 10 years.

For now, the neural network algorithm is being tested on volunteers.

Now the task of the developers is to make the software adapt to the cognitive characteristics of each person and generate clearer images.
